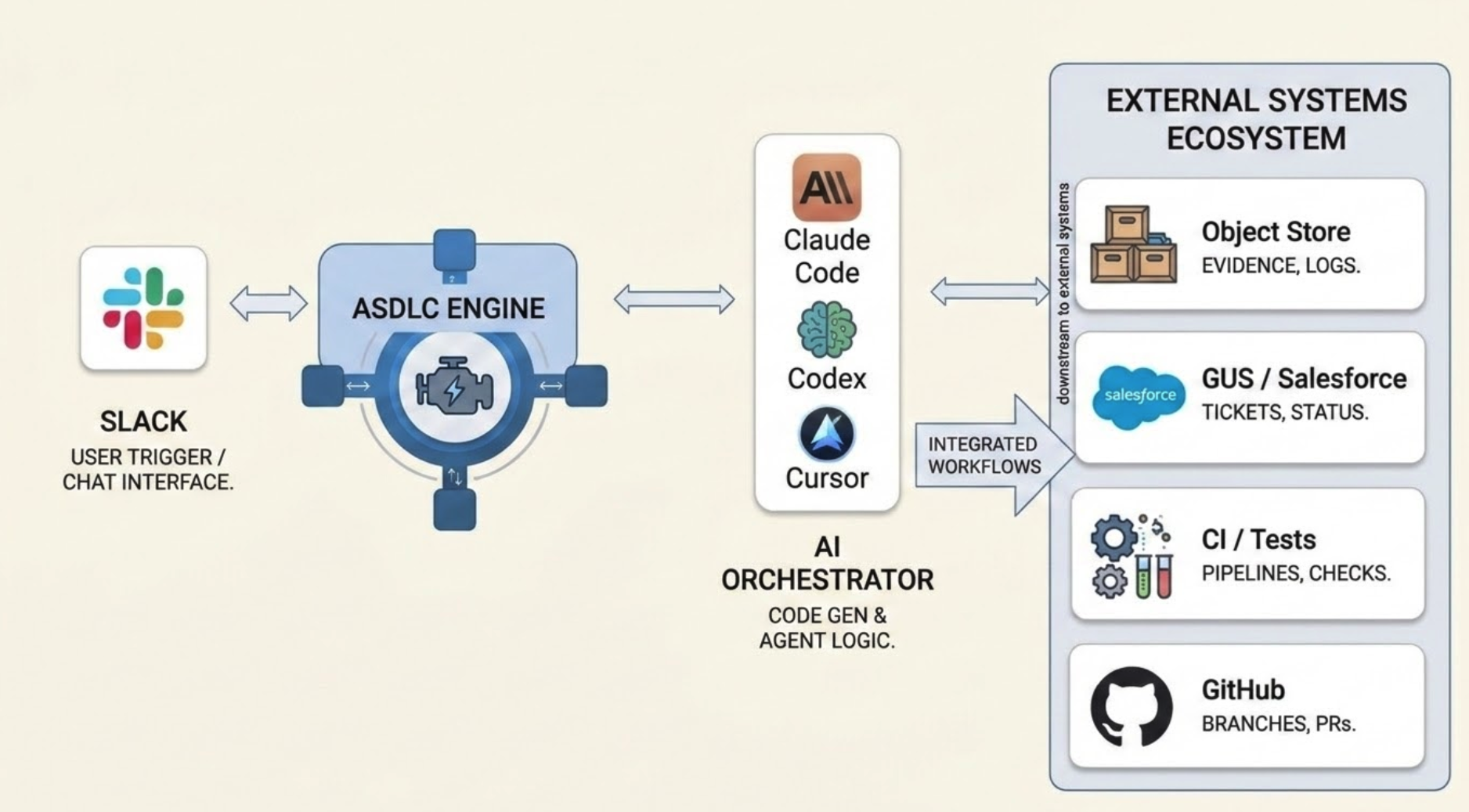

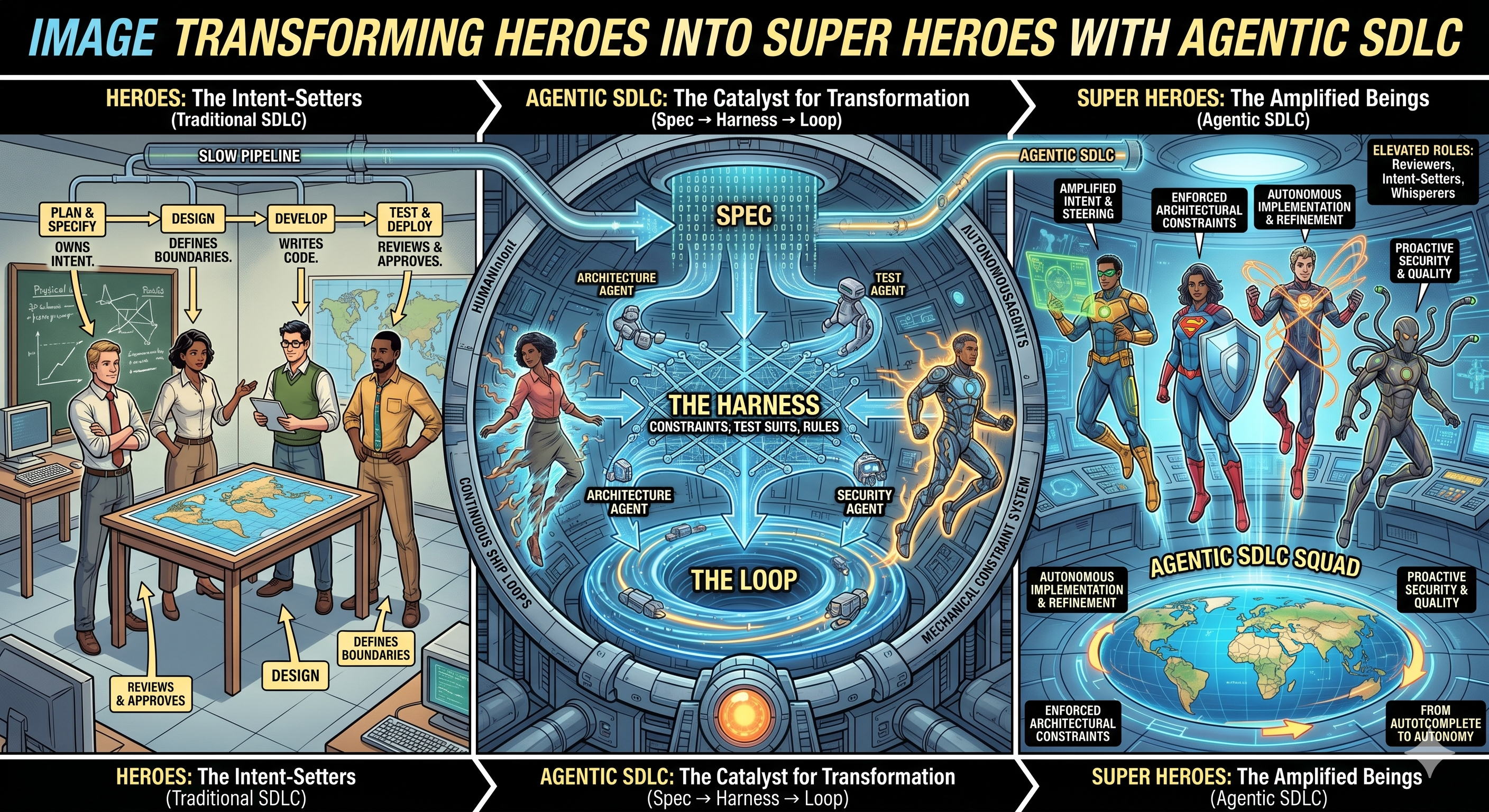

Agentic SDLC is a governed, Slack-first delivery operating model in which AI agents help teams move work from idea to production with clearer handoffs, stronger governance, and measurable delivery flow.

Agentic SDLC — end-to-end transformation view

Agents Do Repeatable Work

Requirements formatting, design checklists, test case authoring, coverage checks, status updates, and handoff preparation.

Humans Approve Key Decisions

Business scope, architecture, release gates, production deploys, and incident response stay under human control.

Every Stage Leaves a Trail

Each stage leaves auditable evidence in Slack Canvas, GUS, and GitHub, plus structured telemetry for process improvement.
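One way to picture how the three principles above fit together is a small sketch in which agents record routine actions freely, human-gated actions are rejected unless an approver is named, and every accepted action becomes a structured audit event. This is an illustration only: `AuditTrail`, `AuditEvent`, `HUMAN_GATED`, and the action names are hypothetical, and nothing here calls a real Slack, GUS, or GitHub API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional

# Hypothetical action names mirroring the human-controlled decisions listed above.
HUMAN_GATED = {
    "business_scope",
    "architecture",
    "release_gate",
    "production_deploy",
    "incident_response",
}


@dataclass
class AuditEvent:
    """One structured record of something that happened in a delivery stage."""
    stage: str
    action: str
    actor: str
    approved_by: Optional[str]
    timestamp: str


@dataclass
class AuditTrail:
    """In-memory stand-in for wherever evidence is actually stored (assumption)."""
    events: List[AuditEvent] = field(default_factory=list)

    def record(self, stage: str, action: str, actor: str,
               approved_by: Optional[str] = None) -> AuditEvent:
        # Gated actions must name a human approver before they are accepted.
        if action in HUMAN_GATED and approved_by is None:
            raise PermissionError(f"{action} requires human approval")
        event = AuditEvent(
            stage=stage,
            action=action,
            actor=actor,
            approved_by=approved_by,
            timestamp=datetime.now(timezone.utc).isoformat(),
        )
        self.events.append(event)
        return event


# Usage: routine agent work is logged directly; a deploy needs a named approver.
trail = AuditTrail()
trail.record("test", "test_case_authoring", actor="agent")
trail.record("release", "production_deploy", actor="agent",
             approved_by="release-manager")
```

Rejecting a gated action before it is recorded keeps the trail limited to actions that were actually allowed to proceed; a real system might instead log the rejected attempt as its own event.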

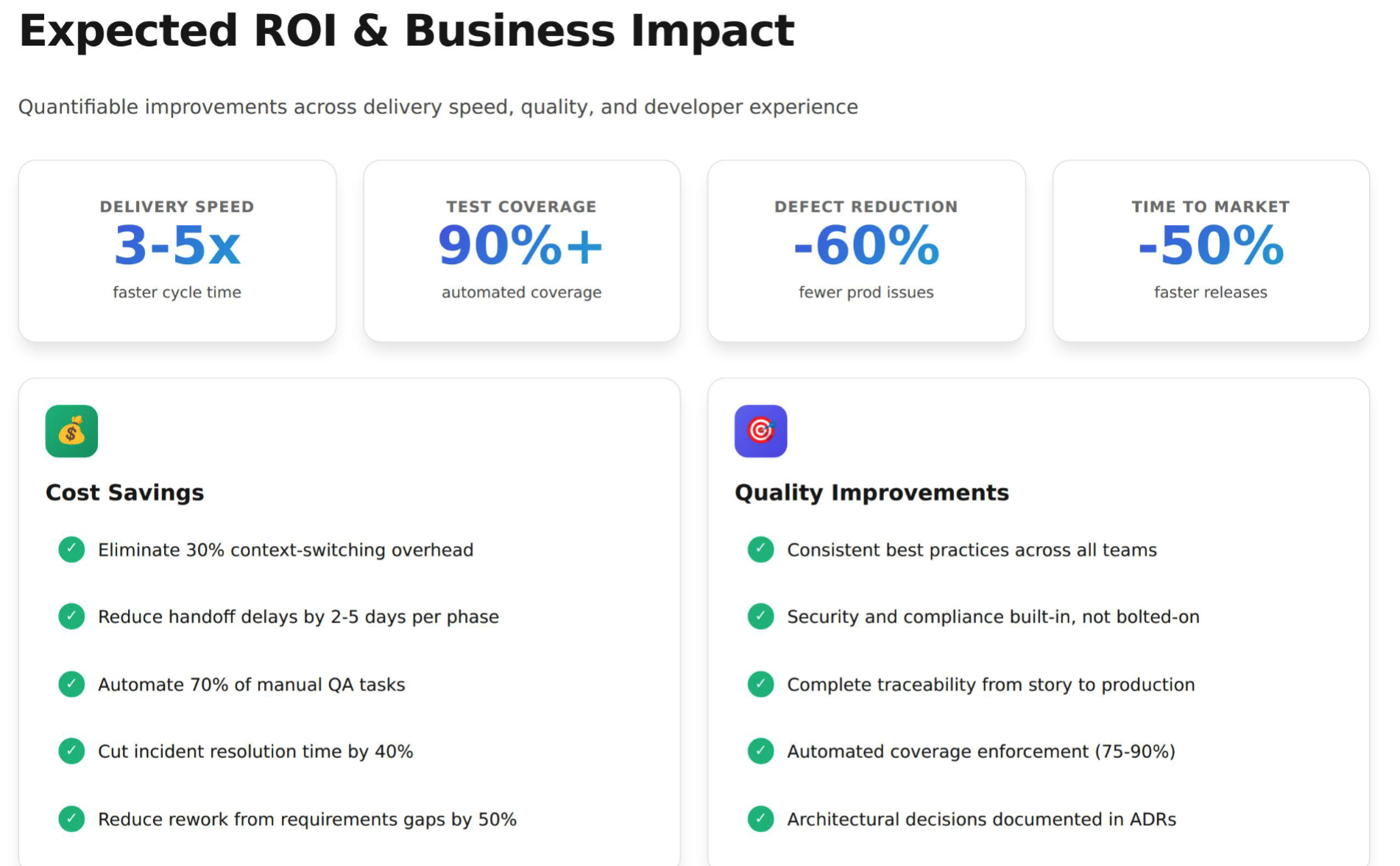

The goal: reduce coordination drag, improve quality, and make delivery measurable end to end.